Recent open-world 3D representation learning methods using Vision-Language Models (VLMs) to align 3D data with image-text information have shown superior 3D zero-shot performance.

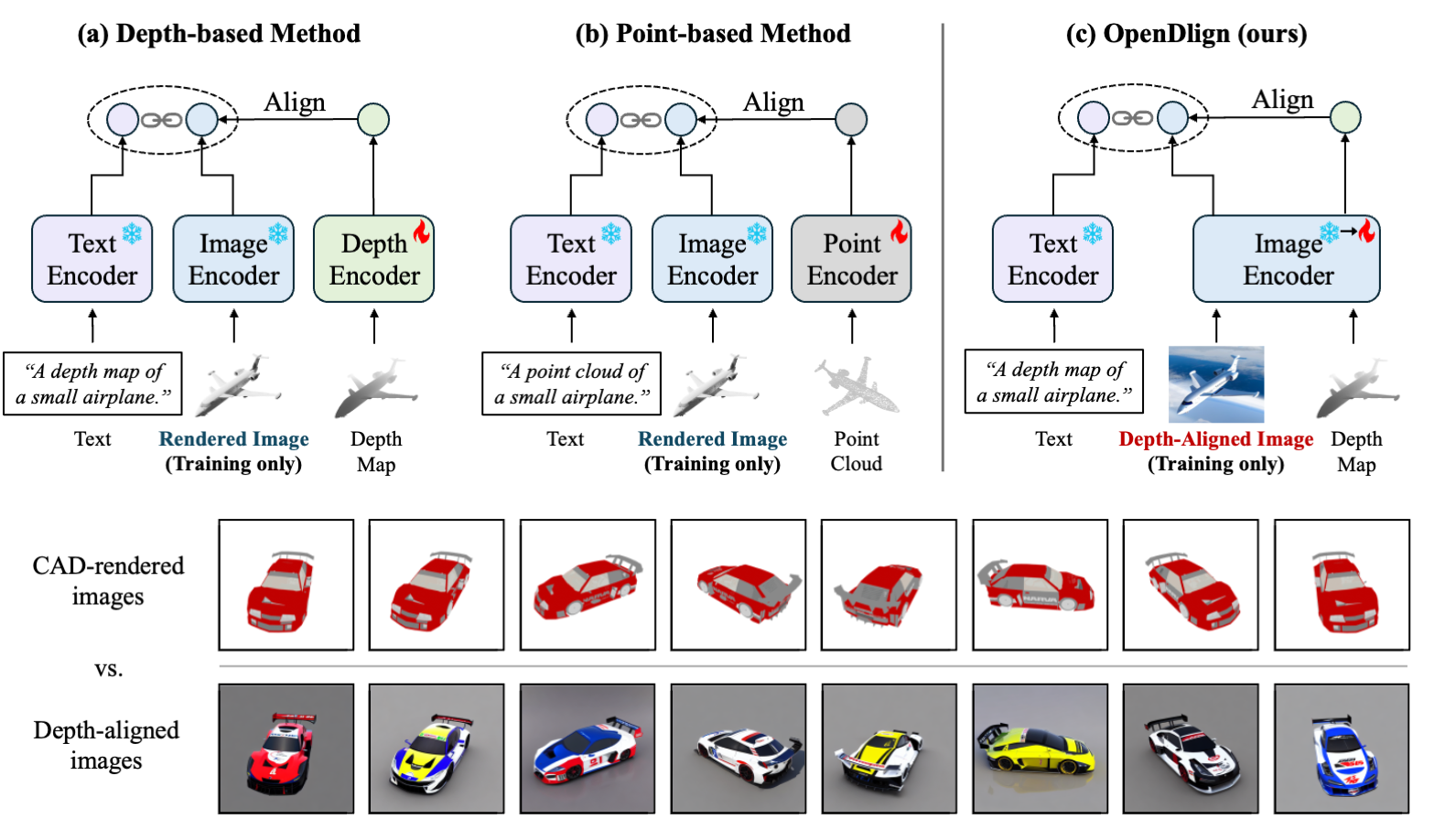

However, CAD-rendered images for this alignment often lack realism and texture variation, compromising alignment robustness. Moreover, the volume discrepancy between 3D and 2D pretraining datasets highlights the need for effective strategies to transfer the representational abilities of VLMs to 3D learning. In this paper, we present OpenDlign, a novel open-world 3D model using depth-aligned images generated from a diffusion model for robust multimodal alignment. These images exhibit greater texture diversity than CAD renderings due to the stochastic nature of the diffusion model. By refining the depth map projection pipeline and designing depth-specific prompts, OpenDlign leverages rich knowledge in pre-trained VLM for 3D representation learning.

Our experiments show that OpenDlign achieves high zero-shot and few-shot performance on diverse 3D tasks, despite only fine-tuning 6 million parameters on a limited ShapeNet dataset. In zero-shot classification, OpenDlign surpasses previous models by 8.0\% on ModelNet40 and 16.4\% on OmniObject3D. Additionally, using depth-aligned images for multimodal alignment consistently enhances the performance of other state-of-the-art models.

@article{mao2024opendlign,

title={OpenDlign: Enhancing Open-World 3D Learning with Depth-Aligned Images},

author={Mao, Ye and Jing, Junpeng and Mikolajczyk, Krystian},

journal={arXiv preprint arXiv:2404.16538},

year={2024}

}